Manual test case generation is laborious and difficult. We want to automate this when possible like we do with Mutation Testing.

Fuzzing

This is a set of automated testing techniques that tries to identify abnormal program behaviours by evaluating how the tested program responds to various inputs. This is a Blackbox Testing method.

We basically want to create random inputs and see if they break things by letting them run for long enough. These are easy to implement and fast and relies on the luck of random input. We may run same things again and again.

Categories

- Generation based: inputs are generated from scratch

- Mutation based: input are made by modifying existing inputs

- Dumb or smart depending on if the fuzzer is aware of the internal structure

- Could be white, grey, or black box, depending on if its aware of the program structure

Goal

- Find real bugs

- Reduce the number of false positives

Mutation Based

- Take existing input

- Randomly modify it (insert or delete a char, etc)

- Pass to program

We can do this with grammars

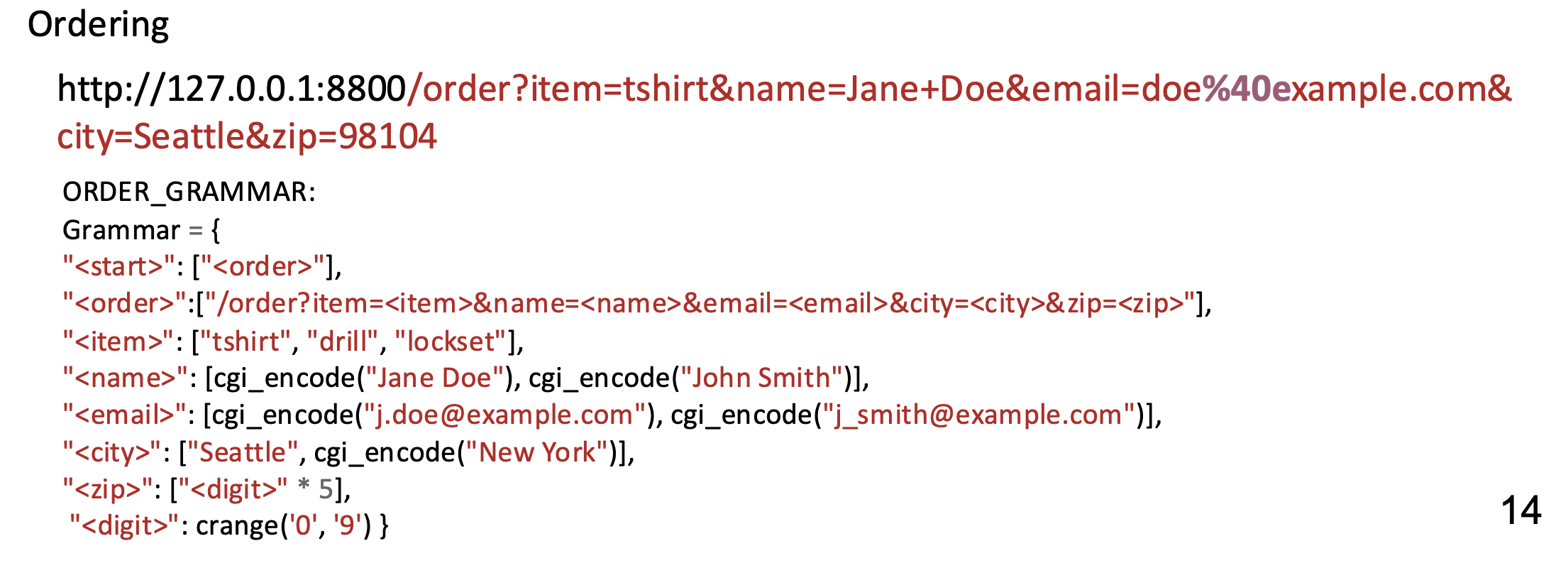

Grammars are the best formalism to formally specify input languages. We place a limit on the number of non-terminals and the total number of expansion steps. These can be seeds in mutation based fuzzing.

Example production

We know that we are testing new behaviour if our code coverage increases.

Greybox Fuzzing

A power schedule distributes the fuzzing time among the seeds in the population. Our objective is to maximize the time spent fuzzing those seeds which lead to higher coverage increase in shorter times. We call the likelihood with which a seed is chosen from the population as the seed’s energy. For instance, assign more energy to seeds that are:

- Shorter

- Execute faster

- Yielding coverage increases more often

- Covering unusual paths

Directed

If we know a spot in the code is more dangerous, we can implement a power schedule to assign more energy to seeds with a low distance to the target function.

- Build a call graph among functions in the program

- For each function executed in the test, calculate its distance to the target function, then do an average distance

- Use the distance to calculate the power schedule You can do more complex calculations by considering finer grained information.

Search Based Fuzzing

Sometimes we are interested in deriving specific test inputs that achieve some objective like reaching specific statements in a program.

- We can’t apply classic search algorithms like BFS and DFS to search for tests because they require us to look at all possible inputs. Domain knowledge helps here.

- We do a kind of graph searching approach where we take an input and then search its neighbours evaluating the distance to some target at each iteration using Hillclimbing

Evolutionary Search

The hillclimbing works well if the neighbourhood is reasonably small otherwise, instead of looking at immediate neighbours, we allow for larger modifications (which becomes a mutation problem). However, this is still pretty costly. See Genetic Algorithms

Challenges in Fuzzing

- Feeding in inputs

- The fuzzing engine will execute the fuzz target many times with different inputs

- It must tolerate any input

- It must not exit

- It could use threads but these need to be joined at the end of the function

- It must be as deterministic as possible

- Must be fast

- Should not modify global state

- The narrower the better

- Detecting abnormal behaviour

- Crashes

- Triggers user provided assertion failures

- Hangs

- Allocates too much memory

- Early crash detection uses sanitizers

- Address sanitizer for memory errors

- Thread sanitizer

- Memory sanitizer

- Undefined behaviour sanitizer

- Leak sanitizer

- Ensuring progress

- We use coverage to do so

- Coming up with interesting inputs

- Generate completely random inputs

- Understand the input type

- Understand the input structure (what a string for example should look like)

- Formal approaches

- Grammar and stuff, useful for structured problems

- Speed

- Avoid paying a penalty for startup time

- Replace costly resources with cheaper ones

- Run many inputs in a single process

- Minimize the number of test cases and their sizes

- Parallelize where possible

Current Limitations

- Lots of false positives

- Focus on code coverage

- Cleaning

- Make the random input more reasonable

- Triage