Logs need timestamps, messages, and attributes. We can also set log levels for filtering. We should be specific with our logs so as not to bloat our historical logs with irrelevant information, which makes the logs we are actually looking for harder to find.
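As a minimal sketch, here is what timestamps, messages, attributes, and level filtering look like with Python's standard `logging` module. This is an illustrative analog, not a specific tool from these notes; the logger name `checkout` and the `user_id` attribute are hypothetical.

```python
import io
import logging

# Capture output in memory so the sketch is self-contained.
stream = io.StringIO()
handler = logging.StreamHandler(stream)
# Each line carries a timestamp, a level, the message, and one attribute.
handler.setFormatter(logging.Formatter(
    "%(asctime)s %(levelname)s %(message)s user_id=%(user_id)s"))

logger = logging.getLogger("checkout")  # hypothetical logger name
logger.addHandler(handler)
logger.setLevel(logging.INFO)   # DEBUG records are filtered out
logger.propagate = False        # keep this output out of the root logger

logger.debug("cart contents loaded", extra={"user_id": 42})  # dropped
logger.info("order placed", extra={"user_id": 42})           # kept
logger.warning("payment retried", extra={"user_id": 42})     # kept

lines = stream.getvalue().splitlines()
print("\n".join(lines))
```

Raising the level to `WARNING` would drop the `order placed` line too; this is the knob that keeps irrelevant detail out of historical logs.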

What do you log?

- Performance metrics

- Logs should tell you what happened and why

- Timestamp precision should match the timescale of execution (fast operations need finer-grained timestamps)

- Time zones matter: record timestamps in UTC

- Sorting and searching help (use structured logs and log aggregation services)

- Redact personally identifiable information

- Logs should answer who, what, when, where, why, and how
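A few of these points (UTC timestamps, millisecond precision, structured output, and PII redaction) can be combined in one formatter. The sketch below assumes Python's standard `logging` module and treats email addresses as the only PII to redact; the class name `JsonUtcFormatter` and the regex are hypothetical and far from a complete PII filter.

```python
import json
import logging
import re
from datetime import datetime, timezone

# Hypothetical, deliberately simple email matcher used for redaction.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

class JsonUtcFormatter(logging.Formatter):
    """Emit one JSON object per record with a UTC, millisecond timestamp."""

    def format(self, record):
        ts = datetime.fromtimestamp(record.created, tz=timezone.utc)
        entry = {
            "timestamp": ts.isoformat(timespec="milliseconds"),  # when
            "level": record.levelname,
            "logger": record.name,                               # where
            # what, with email addresses replaced before the line is written
            "message": EMAIL_RE.sub("[REDACTED]", record.getMessage()),
        }
        return json.dumps(entry)

record = logging.LogRecord(
    name="auth", level=logging.INFO, pathname="auth.py", lineno=10,
    msg="login succeeded for %s", args=("alice@example.com",), exc_info=None,
)
record.created = 0.0  # fixed epoch time so the output is predictable
line = JsonUtcFormatter().format(record)
print(line)
```

Redacting inside the formatter means the raw address never reaches any destination; redacting later in the pipeline would leave it in the original files.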

Types

There are two main types of logs:

- Unstructured (free-form text)

- Structured (named fields, e.g. JSON)

Either way, think about who is consuming the log data so that it's in an agreed-upon format.
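To make the difference concrete, the sketch below writes one hypothetical event both ways and then recovers the IP address from each. The event and field names are made up for illustration.

```python
import json

# The same hypothetical event written two ways.
unstructured = "User 42 failed to log in from 10.0.0.7 after 3 attempts"
structured = json.dumps(
    {"event": "login_failed", "user_id": 42, "ip": "10.0.0.7", "attempts": 3}
)

# Unstructured: the consumer must know the sentence layout to recover a field,
# and this breaks as soon as someone rewords the message.
ip_from_text = unstructured.split(" from ")[1].split(" ")[0]

# Structured: the consumer reads the field by its agreed-upon name.
ip_from_json = json.loads(structured)["ip"]

print(ip_from_text, ip_from_json)
```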

Modern Log Architecture

Back in the day, we used Syslog, which forwards logs to an aggregator: a single place that held the logs as plain files, examined with primitive Unix tools. The aggregator then fed a search engine, which provides a single interface to all log data. Newer tooling lets you search your logs for exceptions, see how the system performs, list the unique values of any field, and spot traffic patterns.
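The queries described above can be illustrated with a toy in-memory stand-in for the search engine. This is only a sketch of the kinds of questions you ask of aggregated, structured logs; it is not how any real log search product works, and the records and field names are hypothetical.

```python
from collections import Counter

# Hypothetical structured records forwarded by several services.
aggregated = [
    {"service": "web", "level": "INFO", "path": "/home", "message": "ok"},
    {"service": "web", "level": "ERROR", "path": "/cart", "message": "TimeoutError: db"},
    {"service": "api", "level": "INFO", "path": "/cart", "message": "ok"},
    {"service": "api", "level": "ERROR", "path": "/pay", "message": "ValueError: bad card"},
    {"service": "web", "level": "INFO", "path": "/cart", "message": "ok"},
]

def search(records, **criteria):
    """Return records whose fields equal every given criterion."""
    return [r for r in records if all(r.get(k) == v for k, v in criteria.items())]

def unique_values(records, field):
    """Sorted distinct values of one field across all records."""
    return sorted({r[field] for r in records if field in r})

def traffic_by(records, field):
    """Count records per value of a field, e.g. requests per path."""
    return Counter(r[field] for r in records if field in r)

exceptions = search(aggregated, level="ERROR")
print(len(exceptions), unique_values(aggregated, "service"), traffic_by(aggregated, "path"))
```

Every one of these queries is a field lookup because the records are structured; with plain files, each would need its own grep or awk expression.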

Logging Tooling

The goal of the logger in your application code is to:

- Disable certain log statements while enabling others

- Maintain a hierarchy of loggers

Loggers use appenders to print logs to multiple destinations. These appenders are inherited additively through the LoggerConfig hierarchy: an event logged by a child logger also goes to its ancestors' appenders.
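Python's standard `logging` module has a close analog of this design: dotted logger names form a hierarchy, handlers play the role of appenders, and `propagate` plays the role of additivity. The sketch below assumes that analogy; the logger names `app`, `app.db`, and `app.cache` are hypothetical.

```python
import io
import logging

app_stream, db_stream = io.StringIO(), io.StringIO()

# Parent logger "app" writes to one destination.
app = logging.getLogger("app")
app.setLevel(logging.INFO)
app.addHandler(logging.StreamHandler(app_stream))
app.propagate = False  # don't leak into the real root logger

# Child "app.db" adds a second destination and still inherits "app"'s handler.
db = logging.getLogger("app.db")
db.addHandler(logging.StreamHandler(db_stream))

# Child "app.cache" is quieted: only WARNING and above get through.
cache = logging.getLogger("app.cache")
cache.setLevel(logging.WARNING)

db.info("query took 12 ms")    # goes to db_stream AND app_stream
cache.info("cache hit")        # disabled by app.cache's level
cache.warning("cache evicted") # goes to app_stream via propagation

print(app_stream.getvalue(), db_stream.getvalue())
```

Setting `db.propagate = False` would be the equivalent of turning additivity off, so `app.db` events would reach only `db_stream`.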

Important

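The level that is used is the one defined for the logger that created the event. When the event is passed up to ancestor appenders through additivity, the ancestors' levels are not checked again.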
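Python's `logging` module shows the same behavior, so it can serve as a small demonstration (assuming the Log4j analogy from above). A parent set to `ERROR` still prints a child's `DEBUG` message, because only the originating logger's level is checked. The names `shop` and `shop.payments` are hypothetical.

```python
import io
import logging

out = io.StringIO()

parent = logging.getLogger("shop")
parent.setLevel(logging.ERROR)        # the parent only accepts ERROR and above...
parent.addHandler(logging.StreamHandler(out))
parent.propagate = False

child = logging.getLogger("shop.payments")
child.setLevel(logging.DEBUG)         # ...but the child accepts DEBUG

child.debug("retrying charge")        # passes the child's check, reaches the parent's handler
parent.debug("parent debug")          # rejected by the parent's own level

print(out.getvalue())
```

To filter at the destination instead, set a level on the handler itself (`handler.setLevel(...)`), since handler levels are still applied during propagation.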

Layouts

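Layouts control the output format, for example CSV, HTML, JSON, Pattern (plain text), and Syslog. Logs can also be sent to destinations such as MongoDB, CouchDB, Cassandra, and Elasticsearch (commonly explored through Kibana).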
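To see what a layout does, the sketch below renders one hypothetical event through three hand-written "layouts". The function names are hypothetical stand-ins, not any logging library's API.

```python
import csv
import io
import json

# One hypothetical log event; the message contains a comma and quotes on purpose.
event = {"time": "2024-05-01T12:00:00Z", "level": "ERROR",
         "logger": "app.db", "message": 'timeout, retrying "orders"'}

def csv_layout(e):
    # csv.writer quotes fields that contain delimiters or quote characters.
    buf = io.StringIO()
    csv.writer(buf).writerow(e.values())
    return buf.getvalue().rstrip("\r\n")

def json_layout(e):
    return json.dumps(e)

def pattern_layout(e):
    # Plain text: nothing is escaped, so the comma stays ambiguous to a parser.
    return f"{e['time']} {e['level']} {e['logger']} {e['message']}"

for layout in (csv_layout, json_layout, pattern_layout):
    print(layout(event))
```

The CSV and JSON outputs can be parsed back into exactly the original fields; the text pattern cannot be split reliably without knowing its layout.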

Ideally, logs are stored in a structured datastore. Text logs, however, are typically formatted according to a pattern, so extracting fields from them requires regular expressions. An example pattern (Log4j 2 PatternLayout) is:

```xml
<Pattern>%d %p %c{1.} [%t] %m%n</Pattern>
```

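Where the conversion specifiers are, respectively:

- `%d`: date

- `%p`: level

- `%c{1.}`: name of the logger, with each package segment shortened to its first letter (e.g. `o.a.c.Foo`)

- `[%t]`: name of the thread, in brackets

- `%m`: the message

- `%n`: new line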
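Parsing lines produced by this pattern back into fields is where the regex comes in. The sketch below assumes `%d` uses Log4j's default date format (`yyyy-MM-dd HH:mm:ss,SSS`) and that `%n` has already been stripped; the sample line is hypothetical.

```python
import re

# Matches "%d %p %c{1.} [%t] %m" under the default %d format assumption.
LINE_RE = re.compile(
    r"^(?P<date>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3}) "
    r"(?P<level>[A-Z]+) "
    r"(?P<logger>\S+) "
    r"\[(?P<thread>[^\]]*)\] "   # thread names may contain spaces
    r"(?P<message>.*)$"
)

def parse(line):
    """Return the line's fields as a dict, or None if it doesn't match."""
    m = LINE_RE.match(line)
    return m.groupdict() if m else None

sample = "2024-05-01 12:00:00,123 ERROR o.a.c.Foo [main] Connection refused"  # hypothetical
print(parse(sample))
```

Any change to the pattern (a different date format, an extra field) silently breaks this regex, which is one argument for emitting structured logs in the first place.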

How do we process these logs?

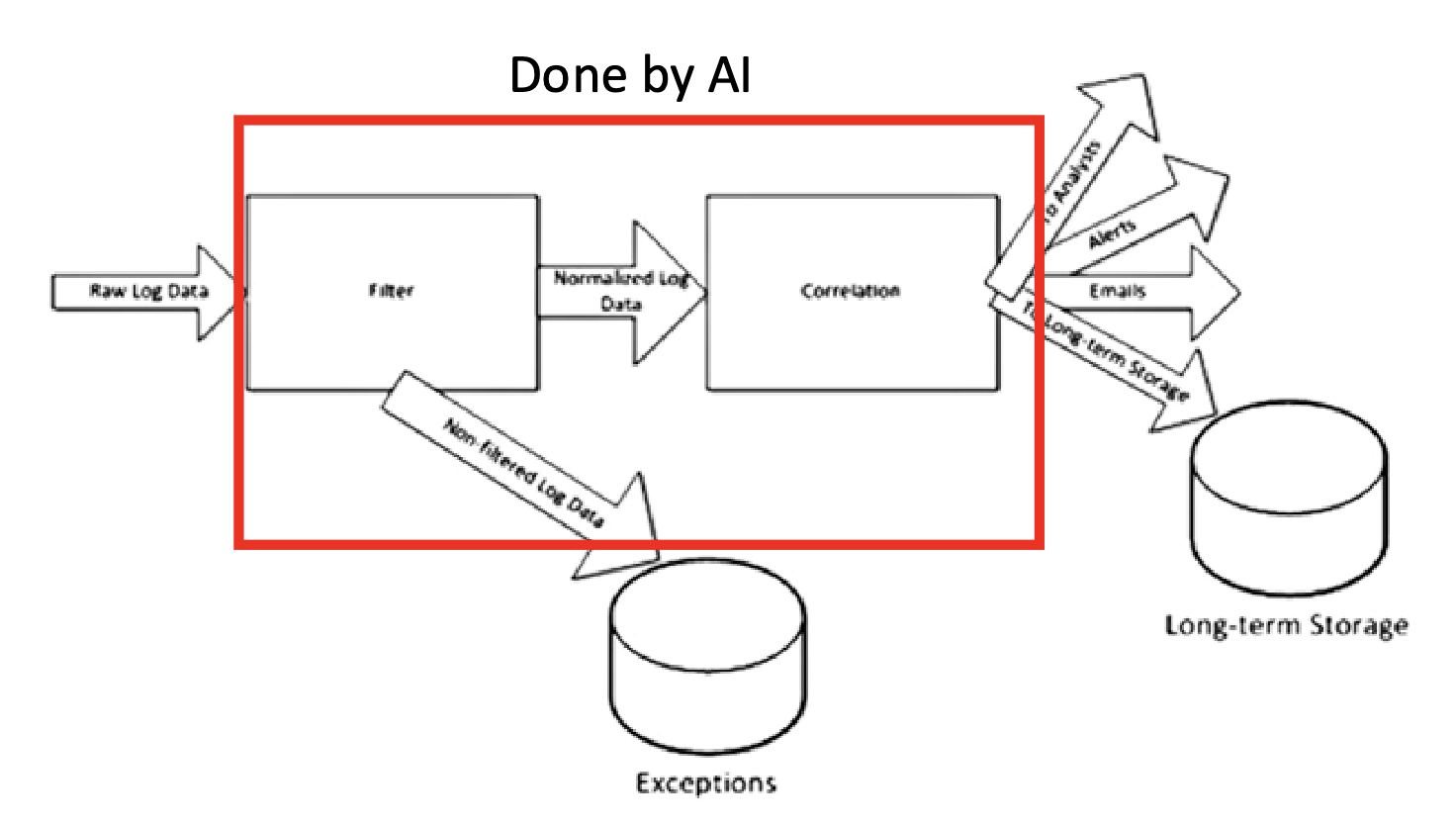

- We start with raw log data

- We filter for log messages that we care about and drop others to reduce system load

- We normalize, mapping each message's elements to a common format

- We correlate related messages and flag groups that matter together even when each message alone is unimportant

- We can take action on the logs
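The steps above can be sketched as a small pipeline. Everything here is hypothetical: the raw line format, the brute-force heuristic, and the threshold of three failed logins were chosen only to show a group of individually unimportant messages becoming significant.

```python
from collections import defaultdict

# Hypothetical raw lines in a simple "LEVEL source: message" shape.
raw = [
    "DEBUG web: heartbeat",
    "WARN auth: failed login user=bob ip=10.0.0.7",
    "WARN auth: failed login user=bob ip=10.0.0.7",
    "INFO web: GET /home 200",
    "WARN auth: failed login user=bob ip=10.0.0.7",
    "WARN auth: failed login user=eve ip=10.0.0.9",
]

def filter_step(lines):
    # Drop messages we don't care about to reduce load downstream.
    return [line for line in lines if not line.startswith("DEBUG")]

def normalize(line):
    # Map each line's elements to a common dict format.
    level, rest = line.split(" ", 1)
    source, message = rest.split(": ", 1)
    fields = dict(p.split("=", 1) for p in message.split() if "=" in p)
    return {"level": level, "source": source, "message": message, **fields}

def correlate(events, threshold=3):
    # One failed login is unimportant; several from the same IP are worth flagging.
    counts = defaultdict(int)
    for e in events:
        if e["message"].startswith("failed login"):
            counts[e["ip"]] += 1
    return [ip for ip, n in counts.items() if n >= threshold]

def act(flagged):
    return [f"ALERT: possible brute force from {ip}" for ip in flagged]

alerts = act(correlate([normalize(line) for line in filter_step(raw)]))
print(alerts)
```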

Note

We can implement “rule correction” on our logs: rules that tell our system to do something when it sees a certain event.
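One way to read this idea is as a table of (condition, action) rules evaluated against each normalized event. The sketch below assumes that reading; the `Rule` type, rule names, and actions are hypothetical, and the actions only return strings instead of paging anyone.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Rule:
    name: str
    matches: Callable[[dict], bool]   # does this event trigger the rule?
    action: Callable[[dict], str]     # what to do when it does

rules = [
    Rule("disk-full",
         lambda e: e.get("message", "").startswith("No space left"),
         lambda e: f"page on-call for host {e['host']}"),
    Rule("5xx",
         lambda e: e.get("status", 0) >= 500,
         lambda e: f"open ticket for {e['path']}"),
]

def apply_rules(event, rules):
    """Run the action of every rule whose condition matches the event."""
    return [r.action(event) for r in rules if r.matches(event)]

print(apply_rules({"host": "db1", "message": "No space left on device"}, rules))
```

Keeping rules as data makes it easy to add or disable one without touching the pipeline code.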

Filtering, normalization, correlation, and similar steps can also be done using AI.