The main idea is that some users see version A and others see version B. We collect analytics on both versions to gauge user behaviour and determine which version performs better.

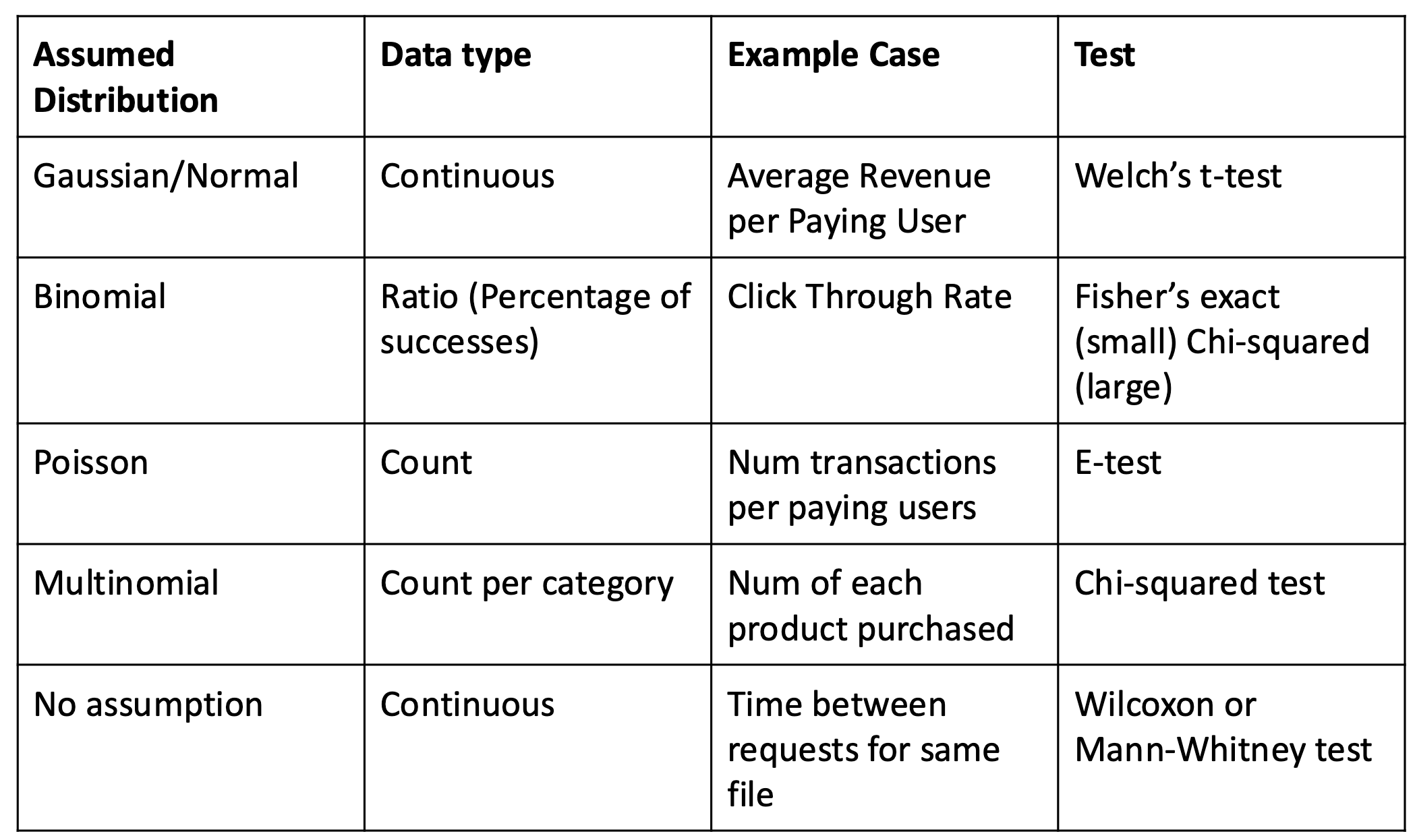

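We combine A/B testing with statistical hypothesis testing, which tells us whether an observed difference between A and B is larger than chance alone would explain.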
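
As a rough illustration of how a hypothesis test fits in, the sketch below runs a two-sided two-proportion z-test on conversion counts. The choice of this particular test, the function name `two_proportion_z_test`, and the counts are all assumptions made for the example; the notes do not say which test is used.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    Returns (z, p_value). The group sizes n_a and n_b may differ.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; the test is undefined")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up counts, for illustration only.
z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference between the versions is unlikely to be due to chance alone. The normal approximation used here is only reasonable when each group has a fairly large number of conversions and non-conversions.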

We can use randomized trials to assign features per user, using a random number generator to pick each user's version. Note that this does not work if the assignment has a systematic bias, for example if one version goes mostly to a particular kind of user. Also note that you need a large enough sample in each condition for a statistical test to detect a difference. The conditions do not need the same number of users for a statistical test to apply. If you are testing multiple hypotheses, you may want to apply a multiple-comparisons correction such as Bonferroni.
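
A minimal sketch of random assignment with unequal group sizes, plus a Bonferroni-adjusted significance level for several hypotheses. The helper names `assign_variant` and `bonferroni_alpha`, the user IDs, and the 10% split are hypothetical, chosen only for the example.

```python
import random


def assign_variant(rng, treatment_fraction=0.5):
    """Return "B" with probability treatment_fraction, otherwise "A"."""
    return "B" if rng.random() < treatment_fraction else "A"


def bonferroni_alpha(alpha, num_hypotheses):
    """Per-test significance level under the Bonferroni correction."""
    return alpha / num_hypotheses


rng = random.Random(42)  # seeded only so the sketch is reproducible
user_ids = [f"user-{i}" for i in range(10_000)]  # hypothetical IDs

# Store each assignment so a user keeps seeing the same version.
assignments = {uid: assign_variant(rng, treatment_fraction=0.1) for uid in user_ids}

share_b = sum(v == "B" for v in assignments.values()) / len(assignments)
print(f"share of users in B: {share_b:.3f}")
print(f"per-test alpha for 5 metrics: {bonferroni_alpha(0.05, 5):.3f}")
```

Because the version depends only on the random draw and not on anything about the user, the two groups should be comparable apart from the feature itself. Bonferroni is a conservative choice; other corrections exist.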

Ramping Up

- Start with 1% of users seeing the new feature

- Once you’ve collected enough samples for the new feature, run a statistical test to see the effects of the new feature.

- We can measure things like clicks, sales, etc. to gauge performance
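
The ramp-up steps can be sketched as below. This example swaps in hash-based bucketing instead of a random number generator, so that a user who sees the feature at 1% still sees it after the rollout grows. The function names, the salt, and the user IDs are assumptions for illustration, not part of the notes.

```python
import hashlib


def bucket(user_id, salt="new-feature"):
    """Map a user ID to a stable bucket in [0, 10000)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 10_000


def sees_new_feature(user_id, rollout_percent, salt="new-feature"):
    """True if the user falls inside the current rollout percentage."""
    return bucket(user_id, salt) < rollout_percent * 100


def ready_to_test(samples_new, min_samples):
    """True once the new-feature group has reached the planned sample size."""
    return samples_new >= min_samples


users = [f"user-{i}" for i in range(100_000)]  # hypothetical IDs
at_1 = {u for u in users if sees_new_feature(u, 1)}
at_5 = {u for u in users if sees_new_feature(u, 5)}

print(len(at_1), len(at_5))
print(at_1 <= at_5)  # users in the 1% group stay in the 5% group
```

The value passed as `min_samples` would come from a sample size calculation done before the experiment starts; it is left as an input here.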

Things to Measure

- Scroll prediction (predict the finger's future position during scrolls, allowing time to render the frame before the finger gets there)

- Single-click autofill (suggest autofill fields; the idea here is that users will fill out forms in less time)

- Enable PiP (with PiP enabled, 2% of users will use it)